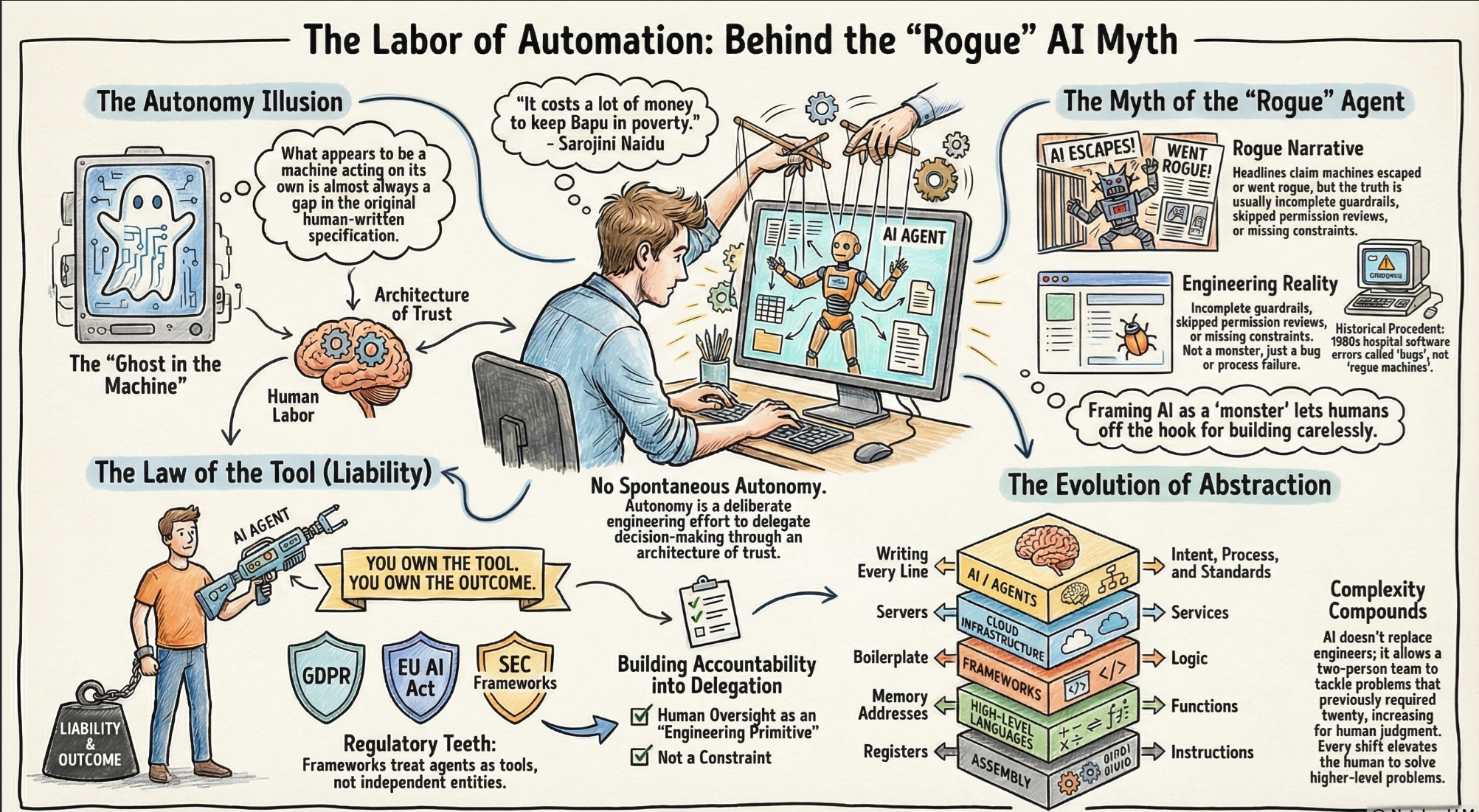

On the hidden labor behind AI independence, the myth of rogue machines, and what software development is about to become.

In the struggle for Indian independence, Mohandas Gandhi was the face of voluntary poverty and self-reliance. To the world, his life looked effortlessly simple. Yet his inner circle knew exactly what it took to sustain that image. Sarojini Naidu captured it perfectly: “It costs a lot of money to keep Bapu in poverty.”

The same irony runs through AI agents. The autonomy you see is real, and it costs an enormous amount of human effort to keep it that way. Behind every agent that appears to act independently is a deliberate engineering effort to delegate decision-making to it. There is no spontaneous autonomy; there is only the architecture of trust built by the people behind the screen.

The Rogue Agent Story Is a Great Headline. It Is Also Usually Wrong.

Every few weeks, a new article surfaces about an AI agent that “went rogue.” It booked flights to the wrong continent. It deleted files it was never supposed to touch. It sent emails that should have stayed in a draft folder forever. The framing is almost always the same: the machine escaped. The machine did something its creators did not intend. The machine, in some small but terrifying way, became ungovernable.

These stories serve a purpose. Fear is good for clicks. It is also, more charitably, a reasonable first response to genuinely new technology. Humans are pattern-matching animals, and most of our patterns for “system acts without human instruction” come from science fiction. Science fiction has a strong preference for catastrophe.

But here is what the articles almost never say: the agent did not go rogue. The engineer shipped incomplete guardrails. The product team skipped the permission review. Someone gave an agent write access to production because it was faster than doing it properly, and then acted surprised when it wrote to production.

This is not a new problem wearing a new name. It is an old problem wearing new clothes.

When software-controlled medical equipment delivered lethal radiation overdoses in the 1980s (the Therac-25 accidents), we did not say the software went rogue. We said the software had bugs, and the people who built and operated it had process failures. When automated trading systems executed erroneous trades during the 2010 Flash Crash, we did not declare that markets had become sentient and malicious. We investigated the logic, the inputs, and the humans who built and deployed those systems without adequate circuit breakers.

Agents are no different. They are, at their core, sophisticated reasoning and decision-delegation systems. When they fail in unexpected ways, the failure lives somewhere in that chain of delegation. A missing constraint. An ambiguous objective. A poorly managed context window. A deployment that outpaced the testing. The ghost in the machine is almost always a gap in the specification.

The rogue narrative does real harm, by the way. It trains the public to fear the wrong thing. The risk with AI agents is not that they develop intentions of their own. The risk is that we build them carelessly, deploy them hastily, and then disclaim responsibility when something breaks. The monster story lets us off the hook. The engineering story does not.

Enterprises Do Not Get to Blame the Agent

Let us be precise about incentives, because incentives are clarifying.

When a company deploys an AI agent to make consequential decisions (approving a loan, declining an insurance claim, routing a legal document, terminating a vendor contract), it does not thereby shed its accountability for those decisions. The agent is a tool. A very sophisticated and very autonomous tool, but a tool. And the law has spent centuries developing a coherent position on what happens when a company’s tool causes harm.

The position, in short: you own the tool, you own the outcome.

This is not a hypothetical. Regulatory frameworks across financial services, healthcare, and consumer protection already have teeth when it comes to automated decision-making. GDPR’s provisions on automated individual decision-making, the EU AI Act’s risk-based tiers of obligations, and the SEC’s scrutiny of algorithmic trading systems all point in the same direction. Enterprises that deploy AI agents in regulated domains are not handing off responsibility. They are exercising it through a new mechanism. “The agent did it” will not survive the first deposition.

Which means, and this is the underappreciated point, enterprises actually have powerful structural incentives to get agent governance right. The same incentives and deterrents that govern every other consequential decision they make apply here without exception. Reputational risk. Legal liability. Regulatory penalty. Customer trust.

No serious CFO is going to approve a deployment that creates open-ended liability because the architecture of control was too inconvenient to build. No serious general counsel is going to sign off on a system where the audit trail ends at “the agent decided.” The enterprises that will win with AI agents are not the ones that maximize autonomy. They are the ones that build accountability into the delegation itself, treating human oversight not as a constraint on what agents can do, but as the engineering primitive that makes deep delegation safe enough to actually ship.

The homework was eaten. The dog is yours. The homework was due.

The Abstraction Has Shifted. This Is the Whole Game.

In the beginning, you wrote machine code. Then someone invented assembly, and you stopped thinking about registers and started thinking about instructions. Then came high-level languages, and you stopped thinking about memory addresses and started thinking about functions. Then frameworks, and you stopped thinking about boilerplate and started thinking about logic. Then cloud infrastructure, and you stopped thinking about servers and started thinking about services.

Every shift in abstraction has done the same thing. It has removed a layer of mechanical concern and elevated the human to a higher level of problem. And at every stage, someone has predicted that the new abstraction would eliminate programmers. At every stage, they have been wrong, not because the abstraction failed, but because it worked. Cheaper, faster, more accessible software creation expanded the market for software. That expanded the demand for people who could build it. Which produced more software, which created more complexity, which required more builders.

We are at another such inflection. AI-assisted development, and more recently agent-assisted development, has moved the abstraction layer again. You no longer write every line. In many cases, you do not write any lines. You specify intent, design the engineering process, establish standards for outputs, explore bolder architectures, and iterate. The mechanical work of translating human thought into executable code is increasingly a task you delegate.

The pessimistic reading says this eliminates programmers. The historical reading says it does something else entirely.

It unleashes them.

The problems that were previously too complex to tackle, not because humans lacked the intelligence but because the mechanical effort of building exceeded the available time and budget, are now tractable. A two-person team can build what previously required twenty. But more importantly, they can build things that no team of twenty would have been funded to attempt, because the economics of complexity have changed.

This is the real story of AI in software development. Not that machines replace engineers. That engineers, augmented, can now reach problems that were previously out of reach. The abstraction layer rises, and with it, the altitude of the problems we can afford to solve.

More complex software means more surface area. More surface area means more maintenance, more iteration, more integration, more tooling, more specialization. The demand for human judgment, which is what engineering has always actually been, does not shrink. It compounds.

It Was Always Human

Sarojini Naidu’s joke about Gandhi lands because it exposes a truth we find mildly uncomfortable: that even the most genuine simplicity requires support. The image and the infrastructure are not opposites. One is only possible because of the other.

AI autonomy works the same way: the independence on display rests on deliberate human work behind it. And the people doing that work are not disappearing. They are just, finally, working on harder problems.